There are plenty of resources on the use of postMessage here and there; however, none of them focus on reproducing the bugs locally. They boil down to “copy this line into the iframe, this line into the page hosting the iframe, and everything will work.”

I had the same issue and wanted to fix it and make sure it was running, without long builds, deploys, etc.

Here we will start from scratch, using Python to serve pages locally. First we will serve two pages on the same port, then make sure postMessage works between these pages, break it by serving them on different ports, and finally fix the iframe communication.

Note that I do not focus on checking the origin of the received event below, but developer.mozilla.org does.

Sending data to a parent frame with postMessage

Step 1 : creating and serving two pages

Let’s start by serving a page and the iframe it contains locally, at the same address. For this you will need a web browser and a working Python distribution. In what follows, everything will be served on port 4000.

index.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>I am the main page</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the main page</h1>

<iframe id="iframe" src="http://localhost:4000/iframe.html" height="600" width="800">

</iframe>

</body>

</html>

iframe.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>Iframe</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the iframe</h1>

<p>I should be in the site</p>

</body>

</html>

Side note: I remembered that serving a page locally involved a “python http.server”. The following trick should be known by everybody using a terminal, if you want to avoid googling again and again ;)

user@user:~$ history | grep http.se

1283 python3 -m http.server 4000

[...]

The command I was looking for was:

python3 -m http.server 4000

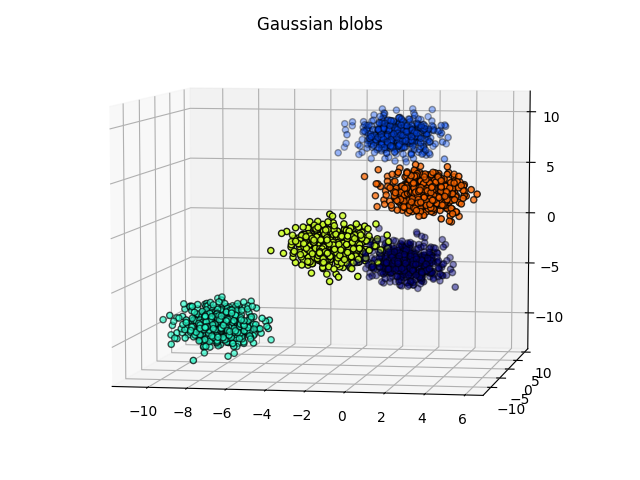

Opening localhost:4000 in your browser should look like…

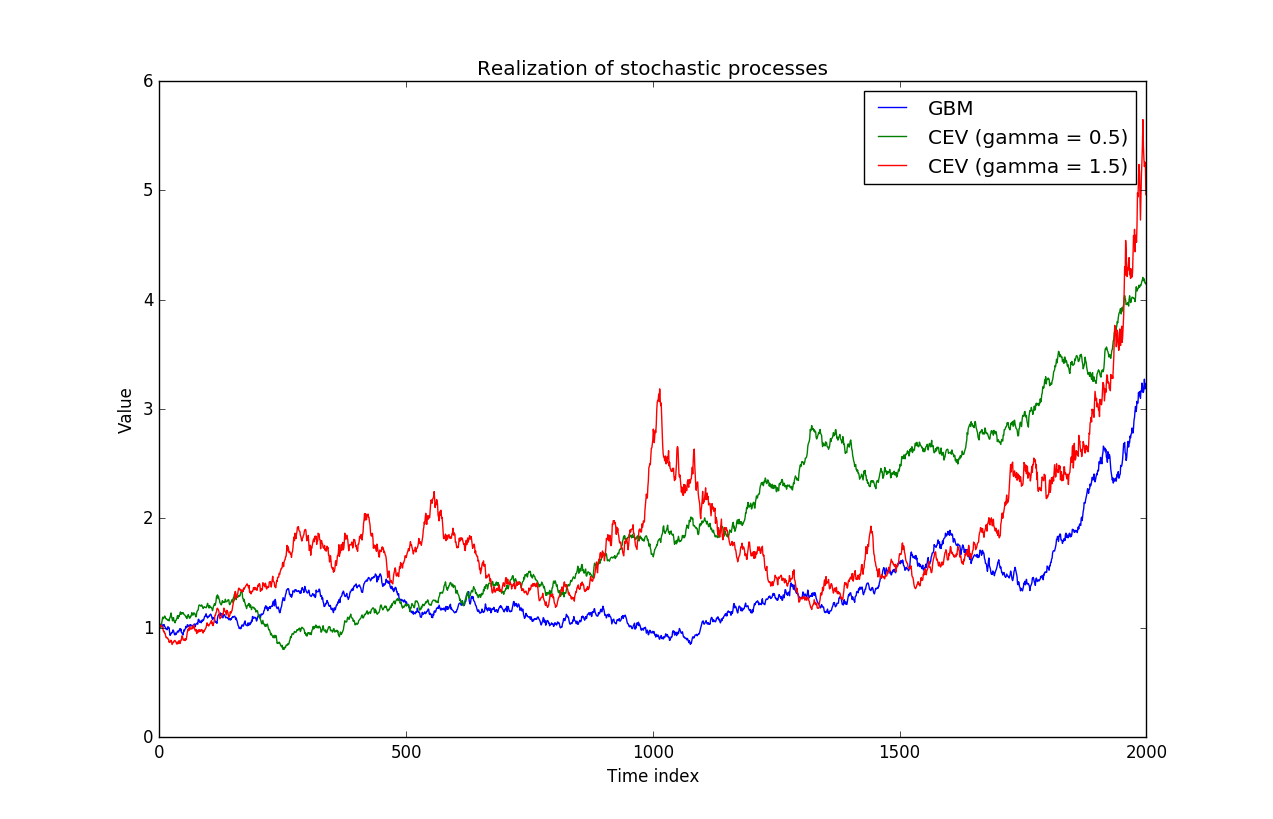

Step 2 : postMessage to parent frame

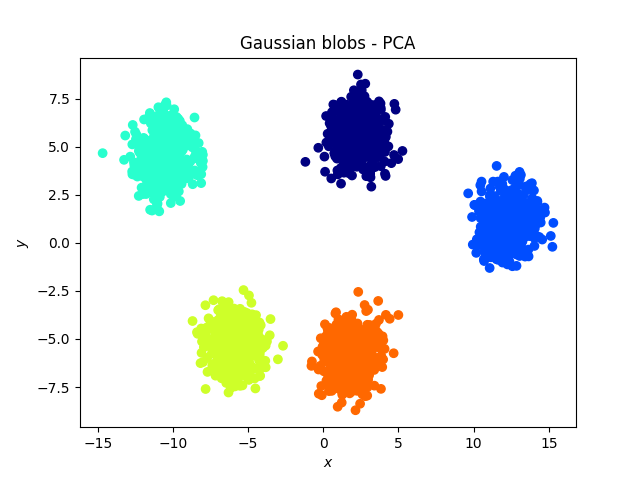

Let’s add some JavaScript to the pages so that the iframe can send data to the parent frame. Basically, the iframe posts a message to the parent window, and a listener registered in the parent window reacts to it.

You can check it by clicking the button once the pages are served.

index.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>I am the main page</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the main page</h1>

<iframe id="iframe" src="http://localhost:4000/iframe.html" height="600" width="800">

</iframe>

</body>

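</html>

<script>

// Listen for messages posted by the iframe

window.addEventListener('message', function() {

alert("Message received");

console.log("message received");

}, false);

</script>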

iframe.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>Iframe</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the iframe</h1>

<p>I should be in the site</p>

<button id="my-btn">Send to parent</button>

</body>

</html>

<script>

document.getElementById('my-btn').addEventListener('click', handleButtonClick, false);

function handleButtonClick(e) {

window.parent.postMessage('message');

console.log("Button clicked in the frame");

}

</script>

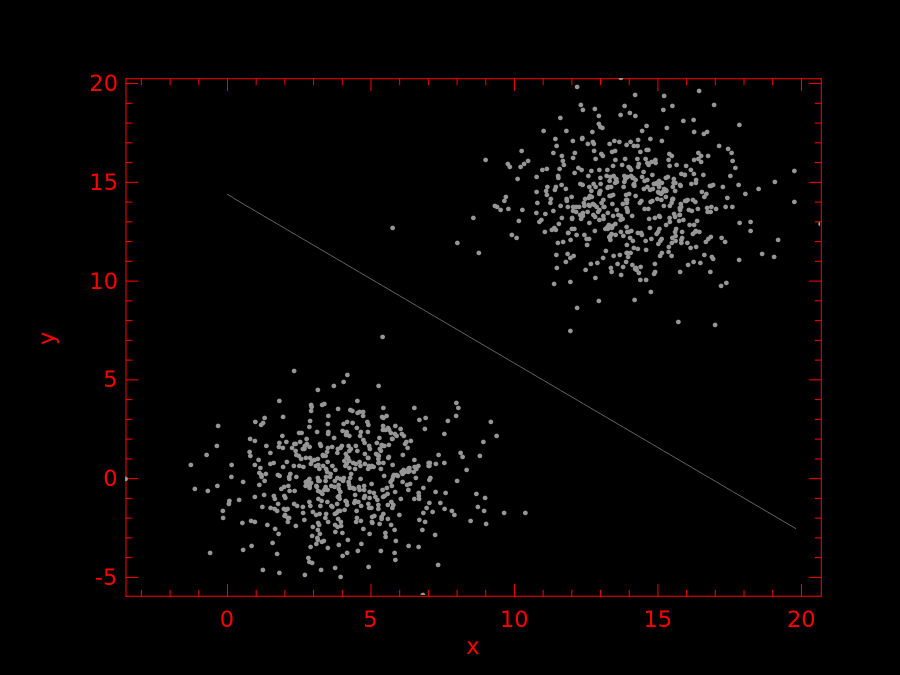
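The message sent above is a plain string, but postMessage can also carry objects, which the parent reads from `event.data`. Below is a minimal sketch of that idea. The `{ type, payload }` shape and the names `buildMessage` and `describeMessage` are my own assumptions for illustration, they are not part of the pages above.

```javascript
// Hypothetical message shape: { type, payload }. The names are illustrative only.
function buildMessage(type, payload) {
  return { type: type, payload: payload };
}

// Meant for the parent page: reads event.data and returns a short description.
function describeMessage(event) {
  const data = event.data;
  if (data && typeof data === 'object' && typeof data.type === 'string') {
    return 'received ' + data.type + ': ' + JSON.stringify(data.payload);
  }
  // Fall back to plain values such as the 'message' string used in this tutorial.
  return 'received raw message: ' + String(data);
}

// Browser-only wiring, skipped when the file is run outside a page.
if (typeof window !== 'undefined' && typeof document !== 'undefined') {
  window.addEventListener('message', function (event) {
    console.log(describeMessage(event));
  }, false);
}
```

In the iframe, the click handler would then call something like `window.parent.postMessage(buildMessage('button-click', { clicks: 1 }))` instead of sending the bare string.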

Step 3 : emulating cross domain, locally

Triggering the error

Now let’s try to serve the frame and the site on “different domains”. For this, we need to move the pages into different folders, so that the layout looks like:

.
├── iframe
│   └── index.html
└── index
    └── index.html

We need to run two instances of http.server: the main page will be served on port 4000 and the iframe on port 4200.

user@user:~/Codes/iframe-communication/index$ python3 -m http.server 4000

and

user@user:~/Codes/iframe-communication/iframe$ python3 -m http.server 4200

The contents of the pages become the following (note that the port in the iframe's src has to be changed):

index/index.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>I am the main page</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the main page</h1>

<iframe id="iframe" src="http://localhost:4200" height="600" width="800">

</iframe>

</body>

</html>

<script>

window.addEventListener('message', function() {

alert("Message received");

console.log("message received");

}, false);

</script>

iframe/index.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>Iframe</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the iframe</h1>

<p>I should be in the site</p>

<button id="my-btn">Send to parent</button>

</body>

</html>

<script>

document.getElementById('my-btn').addEventListener('click', handleButtonClick, false);

function handleButtonClick(e) {

window.parent.postMessage('message');

console.log("Button clicked in the frame");

}

</script>

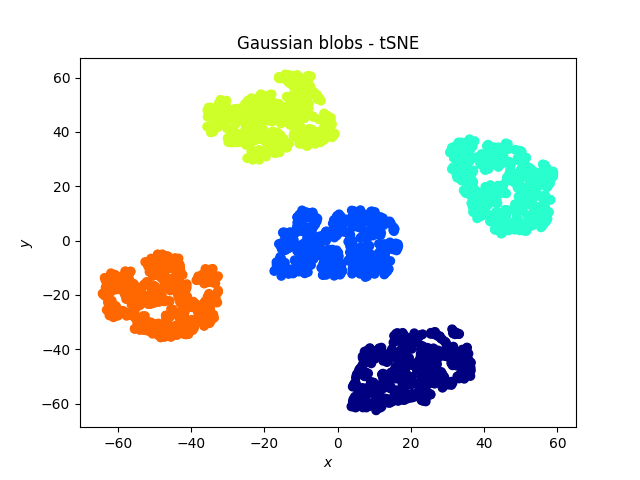

Clicking on the button, you should see the following error in your console:

(index):24 Failed to execute 'postMessage' on 'DOMWindow':

The target origin provided ('null') does not match the recipient

window's origin ('http://localhost:4000').
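The error comes from the two pages no longer sharing an origin: an origin is the scheme, host and port taken together, so changing only the port is enough. Here is a small sketch of that comparison using the standard `URL` class. The helper names `originOf` and `sameOrigin` are mine, and this only illustrates the rule, it is not what the browser runs internally.

```javascript
// An origin is scheme + host + port; the path is not part of it.
function originOf(url) {
  return new URL(url).origin;
}

// Two URLs can talk "same origin" only if all three parts match.
function sameOrigin(urlA, urlB) {
  return originOf(urlA) === originOf(urlB);
}

// Step 2 setup: page and iframe both on port 4000.
console.log(sameOrigin('http://localhost:4000/', 'http://localhost:4000/iframe.html')); // true

// Step 3 setup: page on port 4000, iframe on port 4200.
console.log(sameOrigin('http://localhost:4000/', 'http://localhost:4200/')); // false
```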

Fixing the error

The message is clear enough: an argument is missing. The iframe should know the address of the parent and pass it as the second argument (the target origin) of postMessage.

The pages then become:

index/index.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>I am the main page</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the main page</h1>

<iframe id="iframe" src="http://localhost:4200" height="600" width="800">

</iframe>

</body>

</html>

<script>

window.addEventListener('message', function() {

alert("Message received");

console.log("message received");

}, false);

</script>

iframe/index.html

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>Iframe</title>

<meta name="description" content="Iframe communication tutorial">

<meta name="author" content="TheKernelTrip">

</head>

<body>

<h1>I am the iframe</h1>

<p>I should be in the site</p>

<button id="my-btn">Send to parent</button>

</body>

</html>

<script>

document.getElementById('my-btn').addEventListener('click', handleButtonClick, false);

function handleButtonClick(e) {

window.parent.postMessage('message', "http://localhost:4000");

console.log("Button clicked in the frame");

}

</script>
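As said at the beginning, the listener in the main page accepts messages from anybody. For completeness, here is a minimal sketch of what an origin check on the receiving side could look like. The allow-list `ALLOWED_ORIGINS` and the function names are assumptions of mine, based on the ports used in this tutorial; refer to developer.mozilla.org for the full recommendations.

```javascript
// Assumption: only the iframe served on port 4200 is allowed to talk to the main page.
const ALLOWED_ORIGINS = ['http://localhost:4200'];

function isAllowedOrigin(origin) {
  return ALLOWED_ORIGINS.indexOf(origin) !== -1;
}

// Returns true when the message was handled, false when it was ignored.
function handleMessage(event) {
  if (!isAllowedOrigin(event.origin)) {
    return false; // message from an unexpected origin, ignore it
  }
  console.log('message received:', event.data);
  return true;
}

// Browser-only wiring, skipped when the file is run outside a page.
if (typeof window !== 'undefined') {
  window.addEventListener('message', handleMessage, false);
}
```

This would replace the anonymous listener in index/index.html. Returning a boolean is only there to make the function easy to try out with fake events.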